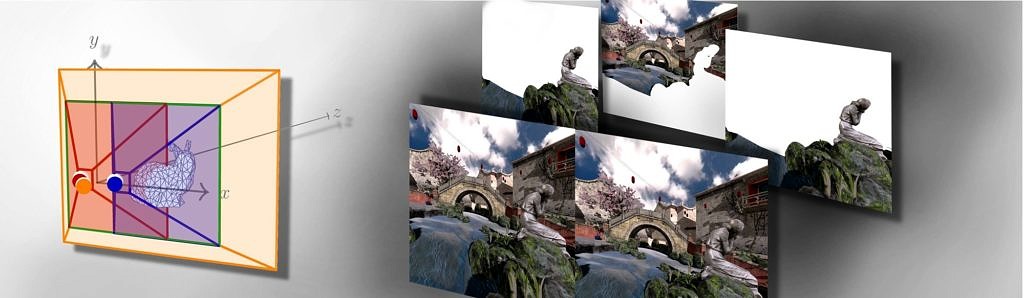

Rendering for head-mounted displays (HMDs) doubles the computational effort, since serving human stereopsis requires one image for the left eye and one for the right. The difference within this image pair, called binocular disparity, is an important cue for depth perception and for the spatial arrangement of surrounding objects. Findings on the human visual system (HVS) have shown that binocular disparities are highly significant, especially in the near range of an observer. With rising distance, however, the disparity converges to a simple geometric shift, and its importance as a depth cue declines exponentially.

In this paper, we exploit this knowledge about human perception by rendering objects fully stereoscopically only up to a chosen distance and monoscopically from there on. By doing so, we obtain three distinct images, which are synthesized into a new hybrid stereoscopic image pair that reasonably approximates a conventionally rendered stereoscopic image pair. The method has the potential to reduce the number of rendered primitives easily to nearly 50% and thus significantly lower frame times. Besides a detailed analysis of the introduced formal error and of how to deal with occurring artifacts, we evaluated the perceived quality of the VR experience in a comprehensive user study with nearly 50 participants. The results show that the perceived difference in quality between the shown image pairs was generally small. An in-depth analysis is given on how the participants reached their decisions and how they subjectively rated their VR experience.
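The primitive saving described above can be illustrated with a rough sketch. It is not the paper's implementation: the function names, the 64 mm interpupillary distance, the example scene, and the rule that an object lies entirely on one side of the split distance are all assumptions made for illustration. The sketch counts near objects twice (left and right image) and far objects once (shared monoscopic image), and compares that with conventional stereo rendering, which draws everything twice.

```python
import math


def vergence_angle_deg(distance_m, ipd_m=0.064):
    """Angle (degrees) between the two eyes' lines of sight to a point
    straight ahead at distance_m. ipd_m is an assumed interpupillary distance."""
    return math.degrees(2.0 * math.atan(ipd_m / (2.0 * distance_m)))


def hybrid_primitive_ratio(objects, split_distance_m):
    """Ratio of primitives drawn by hybrid mono-stereo rendering to those
    drawn by conventional stereo rendering.

    objects: iterable of (distance_m, primitive_count) pairs (hypothetical scene).
    Simplification: each object is treated as fully near or fully far.
    """
    near = sum(n for d, n in objects if d <= split_distance_m)
    far = sum(n for d, n in objects if d > split_distance_m)
    conventional = 2 * (near + far)  # every primitive drawn for both eyes
    hybrid = 2 * near + far          # far primitives drawn once, then shared
    if conventional == 0:
        return 1.0                   # empty scene: nothing to save
    return hybrid / conventional


# Hypothetical scene: a little nearby geometry, most of it far away.
scene = [(0.5, 1_000), (3.0, 4_000), (50.0, 95_000)]
ratio = hybrid_primitive_ratio(scene, split_distance_m=10.0)
print(f"hybrid / conventional primitives: {ratio:.3f}")
print(f"vergence at 1 m:  {vergence_angle_deg(1.0):.3f} deg")
print(f"vergence at 50 m: {vergence_angle_deg(50.0):.3f} deg")
```

Under these assumptions the ratio approaches 0.5 as more of the scene's geometry lies beyond the split distance, which is consistent with the "nearly 50%" figure stated above. The vergence angle is included only to show how quickly the geometric difference between the two eye views shrinks with distance.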


Hybrid Mono-Stereo Rendering in Virtual Reality

IEEE Virtual Reality (Osaka, March 23, 2019 - March 27, 2019)

DOI: 10.1109/vr.2019.8798283
